Key takeaways

- A meta model approach optimizes AI model selection across cost, latency, and accuracy.

- Companies are increasingly evaluating the ROI of token usage due to rising costs.

- Jamba combines transformer and Mamba architectures for efficient long-sequence processing.

- Open weight models like Jamba offer flexibility and accessibility for enterprises.

- The Maestro system can utilize any AI model, optimizing deployment through orchestration.

- Automation in AI model selection can alleviate enterprise complexities.

- No single AI model can address all enterprise needs, necessitating diverse solutions.

- Systems can learn to activate AI models in a cost-effective manner.

- Cost and accuracy trade-offs are simulated for better model selection in enterprises.

- AI orchestration platforms enhance decision-making and efficiency in enterprise AI applications.

- The focus on ROI in token usage reflects a shift towards efficiency in enterprise strategies.

- Maestro can call one model several times, or several models at once, and keep the best answer.

- The combination of architectures in Jamba influences its performance in practical applications.

- Open weight models like Jamba are crucial for resource-limited deployments.

- Intelligent systems automate the selection and optimization of AI models for enterprise tasks.

Guest intro

Ori Goshen is Co-founder and Co-CEO of AI21 Labs, one of the world’s leading enterprise AI systems and foundation models companies. He has scaled AI21 to 10 million users and is focused on model orchestration as the key differentiator for enterprise AI adoption. Goshen’s work centers on optimizing token costs and building proprietary orchestration platforms like Maestro to help enterprises navigate the competitive AI landscape.

The Maestro platform’s role in AI optimization

- The Maestro platform uses a meta model to optimize AI model selection based on cost, latency, and accuracy.

We’ve built a platform called Maestro, which is basically based on what we call a meta model… it tries essentially to predict what would be a successful call at what cost or at what latency.

— Ori Goshen

- Maestro can call the same model multiple times, or several different models at once, and select the best answer.

It can call a model multiple times and try to fetch the best answer or try to call different models at once and try to fetch the best answer.

— Ori Goshen

- Here, a meta model is a model that predicts which call is likely to succeed, and at what cost or latency, and routes requests accordingly.

- The platform enhances decision-making in AI, critical for enterprise efficiency.

- Maestro’s orchestration system can utilize any model, optimizing deployment through a proprietary internal model.

The Maestro is an orchestration system; it can use any model… it just has an internal model, that’s the meta model, that learns these probabilities and know how to route and how to activate these.

— Ori Goshen
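The routing idea described in this section can be illustrated with a minimal sketch. It is not Maestro's implementation: the `Candidate` fields, the `route` and `best_of` functions, and all numbers are hypothetical, and the predicted success probabilities are simply given as inputs rather than learned by a meta model.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    """One model option, with predictions a meta model might supply (hypothetical)."""
    name: str
    p_success: float  # predicted probability that the call succeeds
    cost_usd: float   # predicted cost of one call
    latency_s: float  # predicted latency of one call


def route(candidates: Sequence[Candidate],
          max_cost_usd: float,
          max_latency_s: float) -> Optional[Candidate]:
    """Pick the most likely-to-succeed model that fits the cost and latency budget."""
    feasible = [c for c in candidates
                if c.cost_usd <= max_cost_usd and c.latency_s <= max_latency_s]
    if not feasible:
        return None
    # Highest predicted success wins; cheaper model breaks ties.
    return max(feasible, key=lambda c: (c.p_success, -c.cost_usd))


def best_of(answers: Sequence[str], score: Callable[[str], float]) -> str:
    """Keep the best answer from several calls, given some scoring function."""
    return max(answers, key=score)


candidates = [
    Candidate("small", p_success=0.70, cost_usd=0.001, latency_s=0.4),
    Candidate("medium", p_success=0.85, cost_usd=0.004, latency_s=0.9),
    Candidate("large", p_success=0.95, cost_usd=0.020, latency_s=2.5),
]
choice = route(candidates, max_cost_usd=0.01, max_latency_s=1.0)
print(choice.name)  # medium
```

With this budget the large model is excluded on both cost and latency, so the medium model is chosen. How answers are scored in `best_of` is left open here, since the source does not say how the best answer is identified.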

The impact of rising token costs on enterprise strategies

- Companies are increasingly focused on the ROI of token usage as costs rise.

Cost is definitely a consideration, and these days, you know, token bills are going through the roof… it gets into the phase that it’s not just about the quality of the tokens but actually whether, you know, the ROI is there, whether it’s actually being produced efficiently.

— Ori Goshen

- Financial pressures are leading companies to evaluate the efficiency of token usage.

- The shift towards evaluating token ROI reflects a broader trend in enterprise strategies.

- Rising costs are pushing enterprises to optimize their token-related processes.

- Efficiency in token usage is becoming a priority for enterprises.

- The evaluation of token ROI is critical for maintaining financial sustainability.

- Companies are adapting their strategies to address the challenges posed by rising token costs.
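One way to make token efficiency concrete is to look at cost per successful task rather than cost per call. The sketch below is an illustration only: the source does not define how ROI is measured, and the function name, token counts, prices, and success rates are all hypothetical.

```python
def cost_per_success(tokens_per_call: int,
                     usd_per_million_tokens: float,
                     success_rate: float) -> float:
    """Expected spend for each successful task, assuming failed calls are wasted."""
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    cost_per_call = tokens_per_call / 1_000_000 * usd_per_million_tokens
    return cost_per_call / success_rate


# Hypothetical comparison: a pricier model that usually succeeds
# versus a cheaper model that succeeds half the time.
premium = cost_per_success(2_000, usd_per_million_tokens=10.0, success_rate=0.8)
budget = cost_per_success(2_000, usd_per_million_tokens=1.0, success_rate=0.5)
print(f"premium: ${premium:.4f} per success, budget: ${budget:.4f} per success")
```

Under these made-up numbers the cheaper model still costs less per successful task, but the gap narrows as its success rate drops, which is the kind of trade-off the ROI question points at.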

The Jamba model’s architectural advancements

- The Jamba model combines the attention-based transformer architecture with a newer architecture called Mamba for efficient long-sequence processing.

The interesting part of Jamba, which is a combination of two architectures: one is attention, the transformer-based architecture, and the second is Mamba, which is a new architecture. It’s highly efficient, it’s really good at processing long sequences.

— Ori Goshen

- Jamba’s architectural innovations influence its performance in practical applications.

- The combination of architectures in Jamba enhances its processing capabilities.

- Understanding AI model architectures is crucial for appreciating Jamba’s advancements.

- Jamba’s efficiency in processing long sequences is a significant technical achievement.

- The integration of Mamba architecture sets Jamba apart in terms of processing efficiency.

- Jamba’s design reflects a focus on optimizing AI model performance.
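A rough way to see why a Mamba-style layer helps with long sequences is to compare how the work grows with sequence length. The toy count below assumes the standard characterization of causal attention (every token scores itself and all earlier tokens) and of a recurrent state-space layer (one state update per token). It ignores hidden sizes, constants, and hardware effects, and it says nothing about how Jamba actually arranges its layers.

```python
def causal_attention_pairs(seq_len: int) -> int:
    """Query-key pairs scored by one causal attention layer: grows quadratically."""
    return seq_len * (seq_len + 1) // 2


def recurrent_steps(seq_len: int) -> int:
    """State updates made by one recurrent (state-space) layer: grows linearly."""
    return seq_len


for n in (1_000, 10_000, 100_000):
    ratio = causal_attention_pairs(n) / recurrent_steps(n)
    print(f"{n:>7} tokens: attention does about {ratio:,.0f}x more pairwise work")
```

Growing the sequence tenfold increases the attention count roughly a hundredfold but the recurrent count only tenfold, which is the intuition behind mixing the two kinds of layer.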

The accessibility and efficiency of open weight models

- Jamba is a highly efficient open weight model that can be used and forked by anyone.

Jamba is a highly efficient model that they can use; anybody can take Jamba and then fork it or use it without going through your company.

— Ori Goshen

- Open weight models like Jamba offer flexibility and accessibility for enterprises.

- The significance of open weight models lies in their implications for resource-limited deployments.

- Jamba’s accessibility is crucial for enterprises with limited resources.

- The efficiency of open weight models is a key factor in their adoption.

- Jamba’s open weight nature enhances its utility in diverse enterprise contexts.

- The ability to fork and use Jamba without restrictions is a major advantage for users.

Automating AI model selection in enterprises

- The process of selecting and optimizing AI models for enterprise tasks can be automated with intelligent systems.

The idea here is basically say, hey, you know what, this process can be automated; you just need an intelligent system that kind of learns.

— Ori Goshen

- Automation can alleviate the complexities of AI model selection in enterprises.

- Intelligent systems play a crucial role in automating AI model selection.

- The challenges of AI model selection can be addressed through automation.

- Enterprises benefit from automated systems that optimize AI model selection.

- Automation enhances the efficiency of AI model deployment in enterprises.

- Intelligent systems learn and adapt to optimize AI model selection processes.

The necessity of diverse AI models for enterprise needs

- There is no single AI model that can address all enterprise needs.

There is no one model to rule them all. It’s actually very interesting to see the coverage, like how many of the tasks be dealt with different models.

— Ori Goshen

- The diversity of AI models is essential for meeting enterprise requirements.

- Tailored solutions are necessary due to the complexity of AI deployment in enterprises.

- Enterprises require a variety of AI models to address different tasks.

- The need for diverse AI models highlights the complexity of enterprise applications.

- No single model can fulfill all the needs of an enterprise, necessitating multiple models.

- The diversity of AI models enhances their applicability in enterprise settings.

Learning to activate AI models cost-effectively

- The system learns to activate new AI models in a cost-effective manner.

The system keeps learning how to use new models and how to basically activate them in the most cost effective way.

— Ori Goshen

- Cost-effective activation of AI models is crucial for enterprise efficiency.

- The system’s adaptability enhances its utility in integrating new AI models.

- Learning to activate AI models efficiently is a key operational mechanism.

- Enterprises benefit from systems that optimize the cost of AI model activation.

- The ability to activate models cost-effectively is a significant advantage for enterprises.

- The system’s learning capability ensures efficient integration of AI models.
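The learning behaviour described above can be sketched as a router that tracks each model's observed success rate and picks the lowest expected cost per success. This is a simplified stand-in, not the actual system: `LearningRouter`, `ModelStats`, the optimistic starting estimate for new models, and the prices are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ModelStats:
    cost_usd: float
    successes: float = 1.0  # optimistic prior so a newly added model gets tried
    attempts: float = 1.0

    @property
    def success_rate(self) -> float:
        return self.successes / self.attempts


class LearningRouter:
    """Greedy router that learns success rates from observed outcomes (hypothetical)."""

    def __init__(self) -> None:
        self.stats: dict[str, ModelStats] = {}

    def register(self, name: str, cost_usd: float) -> None:
        """Add a new model; it starts with the optimistic prior."""
        self.stats[name] = ModelStats(cost_usd)

    def pick(self) -> str:
        """Choose the model with the lowest expected cost per successful call."""
        return min(self.stats,
                   key=lambda n: self.stats[n].cost_usd / self.stats[n].success_rate)

    def record(self, name: str, success: bool) -> None:
        """Update the running estimate after seeing whether a call succeeded."""
        s = self.stats[name]
        s.attempts += 1
        s.successes += 1.0 if success else 0.0


router = LearningRouter()
router.register("cheap", cost_usd=0.001)
router.register("strong", cost_usd=0.010)
print(router.pick())  # cheap
for _ in range(20):
    router.record("cheap", success=False)
print(router.pick())  # strong
router.register("new", cost_usd=0.002)
print(router.pick())  # new
```

The greedy rule keeps the sketch short. A production system would likely condition on the task and explore more deliberately, neither of which is shown here.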

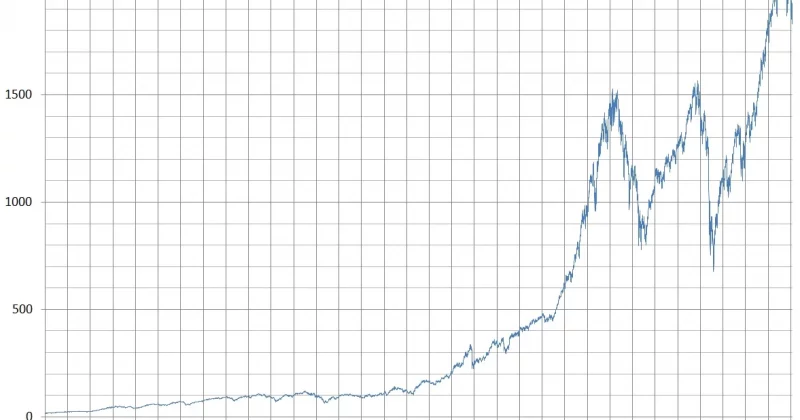

Simulating cost and accuracy trade-offs in model selection

- The system simulates cost-accuracy and latency-accuracy trade-offs to guide model selection in enterprises.

Our system actually simulates what would be the cost-accuracy or latency-accuracy trade-offs… our system will give you this chart, and what you see on the chart, on the left side, on the x-axis is the average cost and on the y-axis is the success rate.

— Ori Goshen

- Simulating trade-offs is crucial for optimizing model selection in enterprises.

- The system provides insights into the cost and accuracy implications of model choices.

- Enterprises benefit from understanding the trade-offs involved in model selection.

- The simulation of trade-offs enhances decision-making in AI model deployment.

- The system’s ability to simulate trade-offs is a valuable tool for enterprises.

- Optimizing model selection through trade-off simulation improves enterprise efficiency.
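A chart of average cost against success rate is usually read by looking for the options that no other option beats on both axes. The sketch below computes that frontier for a handful of made-up configurations. The `Point` type, the labels, and the numbers are hypothetical, and the source does not say that the system computes a frontier in this way.

```python
from typing import NamedTuple


class Point(NamedTuple):
    label: str
    avg_cost: float      # x-axis of the chart
    success_rate: float  # y-axis of the chart


def pareto_frontier(points: list[Point]) -> list[Point]:
    """Keep configurations that no cheaper-or-equal option matches or beats on success."""
    frontier: list[Point] = []
    best_success = -1.0
    # Walk from cheapest to most expensive; at equal cost, try the better one first.
    for p in sorted(points, key=lambda p: (p.avg_cost, -p.success_rate)):
        if p.success_rate > best_success:
            frontier.append(p)
            best_success = p.success_rate
    return frontier


points = [
    Point("A", avg_cost=0.001, success_rate=0.60),
    Point("B", avg_cost=0.004, success_rate=0.85),
    Point("C", avg_cost=0.006, success_rate=0.80),
    Point("D", avg_cost=0.020, success_rate=0.95),
]
print([p.label for p in pareto_frontier(points)])  # ['A', 'B', 'D']
```

Configuration C drops out because B is both cheaper and more successful. The remaining points are the real choices: each step up in success rate costs more.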

Disclosure: This article was edited by Editorial Team. For more information on how we create and review content, see our Editorial Policy.
